The Loop That Builds Itself

The big pattern in personal AI isn't one tool. It's a loop. Ask the AI how it could get better. Make the fix. Ask again. The wins stack up faster than you think.

Where this started

I've been building personal AI infrastructure for over a year now. Early on, I treated the AI the way most people do. I gave it tasks. It did them. Sometimes well, sometimes not. I'd fix what was broken and move on.

Then I started asking a different question. Instead of "do this thing," I started asking "what would make you better at doing this thing?"

That sounds simple. It is simple. But run that question on a loop, day after day, for months, and the effect stops being simple. It compounds. Each fix makes the next answer better. Each gap the AI finds and fills gives it more to work with. So it finds the next gap.

The pentesting mindset

My background is ethical hacking. The work loops back on itself. You find every bit of info you can. You act on it. Then you find more. Then you go back and redo all the old steps. You have to. You know more now.

That's the same loop. Map, find, build, test. The difference with AI is that the system itself participates in the mapping. It can tell you where it failed. It can log its own mistakes. It can look at a week's worth of its own activity and say "here's a pattern, here's what keeps going wrong, here's what I'd need to fix it."

I didn't plan this from the start. It grew out of the same instinct that drives any good security assessment. You don't just test once and call it done. You keep going. You keep asking. You assume there's always another gap.

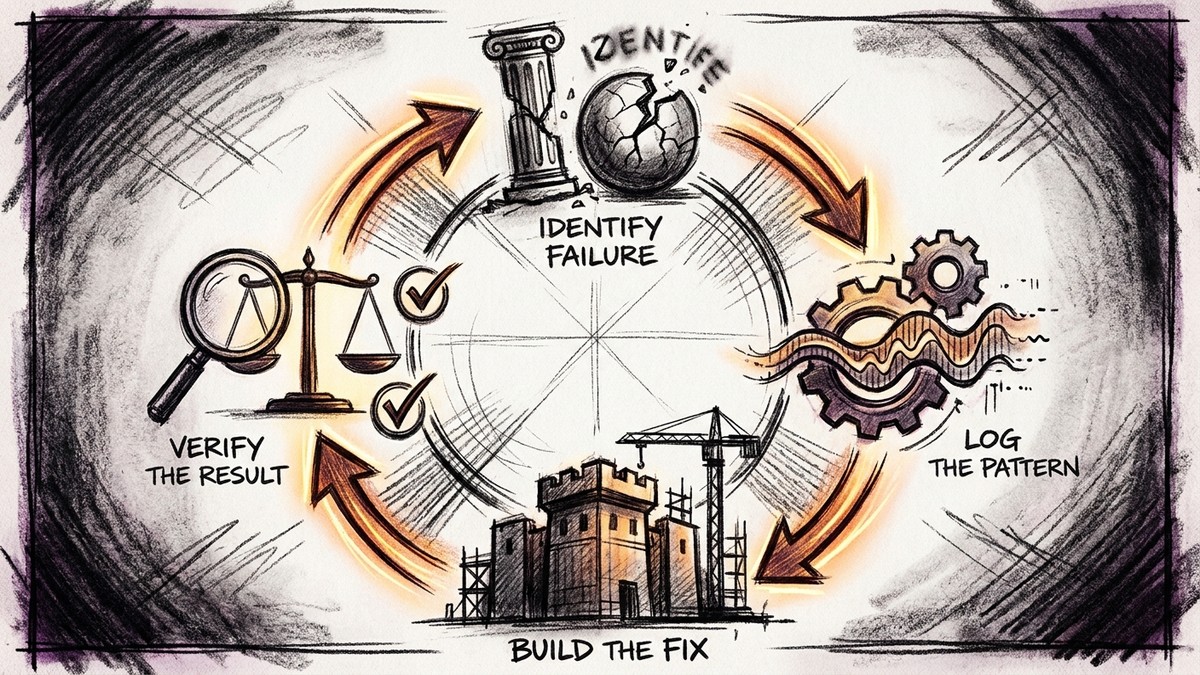

What the loop actually looks like

Let me get concrete. My AI catches its own slip-ups without being asked. When it messes up, it logs what broke, why it broke, and what rule would stop it next time. I read those logs each week. I pull out the patterns. I write new rules.
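Here is one way to picture that log. It's a minimal sketch, not my actual setup: the `FailureEntry` fields, the `tag` label, and the `weekly_patterns` helper are all made-up names for illustration. The idea is just that each failure carries the three things above, and the weekly review groups them to see what repeats.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class FailureEntry:
    """One self-reported failure. All field names here are hypothetical."""
    day: date
    what_broke: str
    why: str
    proposed_rule: str
    tag: str  # short label used to group similar failures


def weekly_patterns(log, week_end, min_count=2):
    """Return (tag, count) pairs seen at least min_count times
    in the 7 days ending on week_end."""
    week_start = week_end - timedelta(days=6)
    recent = [e for e in log if week_start <= e.day <= week_end]
    counts = Counter(e.tag for e in recent)
    return [(tag, n) for tag, n in counts.most_common() if n >= min_count]


# Invented sample entries, only to show the shape of the data.
log = [
    FailureEntry(date(2025, 1, 6), "Wrong date format in report",
                 "No style rule on file", "Use ISO 8601 dates", "formatting"),
    FailureEntry(date(2025, 1, 8), "Summary ran too long",
                 "No length limit given", "Cap summaries at 200 words", "formatting"),
    FailureEntry(date(2025, 1, 9), "Cited a stale file",
                 "Did not check modified time", "Re-read files before citing", "stale-context"),
]

patterns = weekly_patterns(log, week_end=date(2025, 1, 12))
print(patterns)
```

Anything that shows up more than once in a week is a candidate for a new rule. One-off failures stay in the log until they repeat.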

The AI also tracks its own gaps. When it hits a task it can't do well, it flags it. Not in a vague way. It writes down what skill is missing. It writes why that skill matters. It writes what it would take to build it. Those notes turn into my build queue.
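A rough sketch of how gap notes could turn into a build queue. The `GapNote` fields and the `build_queue` function are assumptions for illustration, and ranking by how often a gap gets flagged is just one plausible ordering, not a rule from my system.

```python
from dataclasses import dataclass


@dataclass
class GapNote:
    """A flagged gap. Field names are hypothetical."""
    missing_skill: str
    why_it_matters: str
    what_it_takes: str
    times_flagged: int = 1


def build_queue(notes):
    """Merge notes about the same skill, then sort so the most
    frequently flagged gap comes first."""
    merged = {}
    for note in notes:
        if note.missing_skill in merged:
            merged[note.missing_skill].times_flagged += note.times_flagged
        else:
            # Copy the note so the caller's objects are not mutated.
            merged[note.missing_skill] = GapNote(
                note.missing_skill, note.why_it_matters,
                note.what_it_takes, note.times_flagged,
            )
    return sorted(merged.values(), key=lambda n: n.times_flagged, reverse=True)


# Invented sample notes.
notes = [
    GapNote("draft calendar invites", "Scheduling is manual",
            "Calendar access and a template"),
    GapNote("parse PDF invoices", "Totals get retyped by hand",
            "A PDF text extractor and a field map"),
    GapNote("parse PDF invoices", "Came up again on the monthly close",
            "A PDF text extractor and a field map"),
]

queue = build_queue(notes)
for item in queue:
    print(item.times_flagged, item.missing_skill)
```

The point of the structure is that a vague "I couldn't do that" never enters the queue. Every entry says what is missing, why it matters, and what it would take.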

It also pitches new tools. It watches how I work. It knows where my time goes. When it spots a manual step it could automate, it tells me. When it sees a pattern that shows up in many tasks, it tells me. I never asked it to do this. It came from running the loop long enough. The AI had enough context to act on its own.

Why most people never get here

This loop is rare because it needs memory and context. You need an AI that remembers. Not for one chat. Across weeks and months. It has to know your work. It has to know your goals. It has to know your past decisions. Without that, its self-check means nothing.

If you're starting from zero every time you open a chat window, the AI can't tell you what it's bad at. It doesn't know. It has no history to look back on. No failures to reference. No patterns to spot.

The loop only works when the system has depth. And building that depth takes time. There's no shortcut. You run the loop, add context, run it again. Month after month. The first week is barely useful. The first month starts to show promise. After a year, you're working with something that genuinely knows your blind spots better than you do.
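To make "run the loop, add context, run it again" concrete, here is a bare-bones sketch of memory that survives between sessions. It assumes a single JSON file as the store; the file layout and the `load_memory` and `run_pass` names are hypothetical, and a real setup would hold far more than this.

```python
import json
import tempfile
from pathlib import Path


def load_memory(path):
    """Load accumulated context, or start empty on the first run."""
    p = Path(path)
    if not p.exists():
        return {"rules": [], "history": []}
    return json.loads(p.read_text(encoding="utf-8"))


def run_pass(path, answer, new_rule=None):
    """One pass of the loop: record what the AI said about itself,
    optionally add a rule, and save everything for the next pass."""
    memory = load_memory(path)
    memory["history"].append(answer)
    if new_rule and new_rule not in memory["rules"]:
        memory["rules"].append(new_rule)
    Path(path).write_text(json.dumps(memory, indent=2), encoding="utf-8")
    return memory


# Two passes against a throwaway file, with invented answers.
with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "memory.json"
    run_pass(store, "I keep guessing your date format.",
             new_rule="Use ISO 8601 dates")
    memory = run_pass(store, "Dates are fine now. Summaries run long.")

print(len(memory["history"]), memory["rules"])
```

The second pass starts with everything the first pass left behind. That carry-over is the whole difference between this and a fresh chat window.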

It's not sentient. It's systematic.

I want to be clear on what this is. The AI is not thinking about itself. It is not aware. It is not alive. It runs a process. It logs failures. It finds patterns. It suggests fixes. That is all it does.

That is what makes it strong. It does not get bored. It does not skip steps. It does not forget to log a thing because it got busy. It runs the same steps each time. The output gets better because the input gets richer.

What makes a good pentester is what makes a good self-improving AI. Not creativity. Not gut feel. Discipline. The will to map the surface over and over. Each pass shows you what the last one missed.

The real discovery is the loop

People ask me what I built. They want to know the tools. They want the agents. They want the features. Those matter. But the real thing I found here is the loop. It's the habit of asking the system how to make it better, and the setup to act on the answers.

Everything else grows from that. The tools, the agents, the workflows, the skills. They're all outputs of the loop. The loop is the input. And once it's running, it doesn't stop. It just keeps finding the next thing to build.

If you're building personal AI and you're not running this loop yet, start. Ask your AI what it's bad at. Ask it what context it wishes it had. Ask it what it would build if it could. Then build it. Then ask again.

That's the whole thing. It's a loop that builds itself.
