What actually happened

My wife mentioned the rep had stopped by. I asked her about it. She said I could review the doorbell recording if I wanted. So I sent my agent to review the recording for me, and it came back with a whole report.

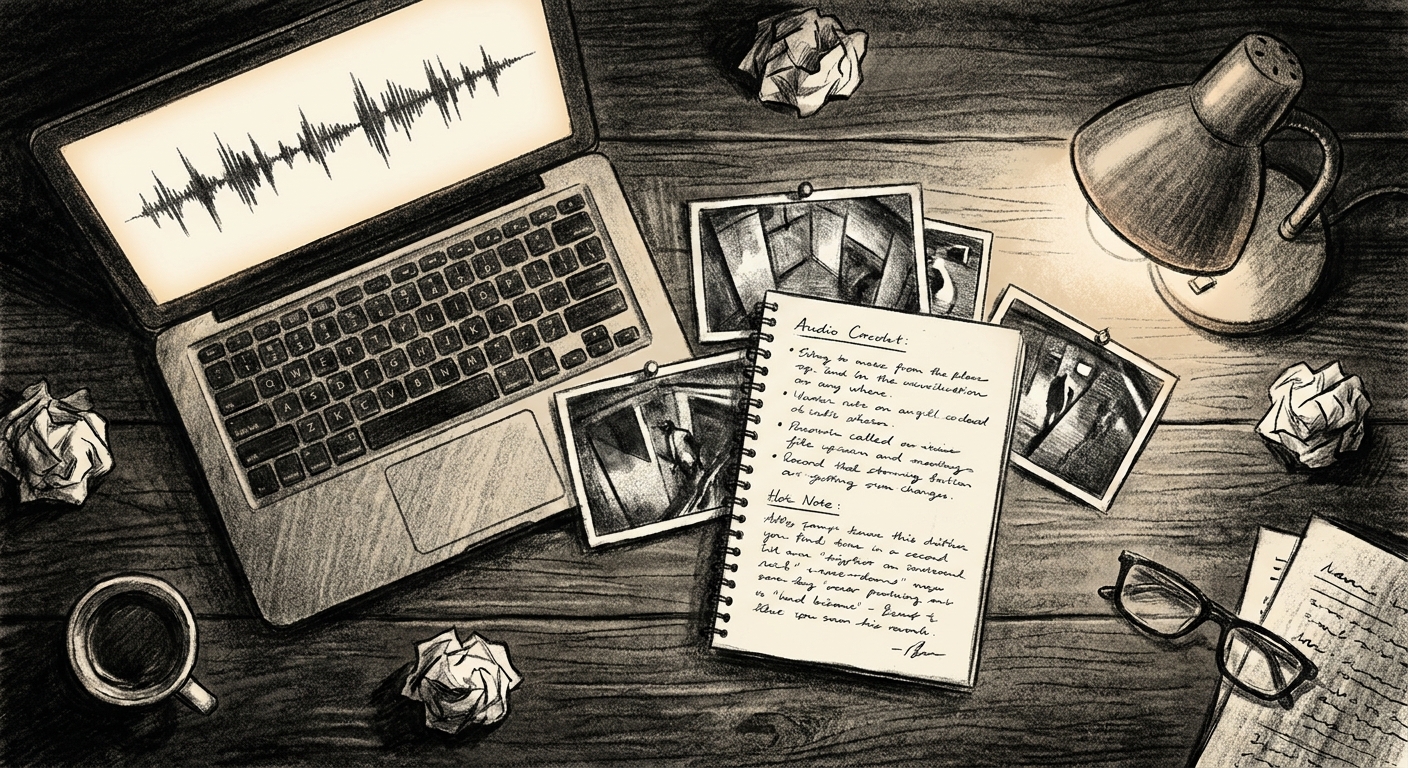

It authenticated to my UDM controller using credentials I'd already wired into its environment. It listed the cameras, found the doorbell, queried the events API for ring activations in the right window, and found the exact event at 5:03:07 PM. It downloaded the 5-minute clip. It split out the audio and ran it through Whisper. It pulled six still frames from the video at strategic timestamps. It read the lowercase wordmark embroidered on the rep's jacket and matched it to the marketing pamphlet in his hand. It searched the web for complaint patterns about the company brand and surfaced multiple BBB and consumer-review sources documenting their door-to-door practices. It told me the rep had no business card on him, which is a real-world sales red flag. It walked me through what to do if anyone in the house had signed anything on his tablet, including the Ontario direct-sales 10-day cooling-off window.
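For the curious, the "split out the audio" and "pulled six still frames" steps are the least mysterious part. Here is a minimal sketch of how that could look, not what the agent actually ran. It assumes `ffmpeg` is installed and the clip is already on disk. The function names, the output filenames, and the evenly spaced timestamps are my own stand-ins for illustration; the agent picked its timestamps by its own logic.

```python
from pathlib import Path


def frame_timestamps(duration_s: float, count: int) -> list[float]:
    """Return `count` timestamps spread evenly across the clip, skipping both ends."""
    if count <= 0 or duration_s <= 0:
        return []
    step = duration_s / (count + 1)
    return [round(step * (i + 1), 3) for i in range(count)]


def audio_command(clip: Path, wav: Path) -> list[str]:
    """ffmpeg arguments that drop the video stream and write 16 kHz mono audio."""
    return ["ffmpeg", "-y", "-i", str(clip), "-vn", "-ac", "1", "-ar", "16000", str(wav)]


def frame_command(clip: Path, at_s: float, jpg: Path) -> list[str]:
    """ffmpeg arguments that seek to `at_s` seconds and save a single frame."""
    return ["ffmpeg", "-y", "-ss", f"{at_s:.3f}", "-i", str(clip), "-frames:v", "1", str(jpg)]


def plan(clip: Path, duration_s: float, frames: int = 6) -> list[list[str]]:
    """Build the full list of commands: one audio extraction, then one per still frame."""
    out_dir = clip.parent  # hypothetical choice: write outputs next to the clip
    commands = [audio_command(clip, out_dir / "audio.wav")]
    for n, t in enumerate(frame_timestamps(duration_s, frames), start=1):
        commands.append(frame_command(clip, t, out_dir / f"frame-{n}.jpg"))
    return commands
```

Each entry from `plan(Path("clip.mp4"), 300)` can be handed to `subprocess.run(cmd, check=True)`. The resulting WAV is what goes to the transcription model, and the JPEGs are what go to the vision model.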

One sentence in. Sixty seconds out.

What this is not

This is not a flex about how cool my setup is. It is not a "look how much I can automate" post. The point is much more boring than that, and much more important.

The point is that the gap between "I have a vague concern" and "I have a complete answer with evidence" used to be hours, sometimes days. You'd watch the clip on your phone. You'd squint at the badge. You'd Google the company name. You'd skim BBB. You'd ask a neighbour. You'd half-remember what the guy said. You'd guess.

That gap is now seconds, if your tools are wired up. And if your tools are not wired up, that gap is still hours and you live with the guess.

What was actually wired up

Nothing exotic. The pieces are all things a normal small-business operator either already has or can have for the cost of attention.

- A UniFi Dream Machine running Protect with a doorbell camera. Off-the-shelf hardware, one-time purchase.

- An AI assistant with API credentials for the things I want it to touch. The doorbell. The transcription model. A web search. Vision on the still frames.

- An agent that, given those credentials, reviews the documentation, figures out how to interact with each service, and does what I ask it to do.

Not a stack. Not a framework. Not a "platform." Credentials, an agent, and trust that it knows how to use them.
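If "credentials in the right place" sounds hand-wavy, this is roughly the level of glue it means. The sketch below is a preflight check that reports which services are missing a credential before you ask the agent anything. Every environment variable name here is hypothetical; use whatever names your own setup uses.

```python
import os
from typing import Mapping, Optional

# Hypothetical variable names, grouped by the service each one unlocks.
REQUIRED: dict[str, list[str]] = {
    "doorbell": ["PROTECT_HOST", "PROTECT_USERNAME", "PROTECT_PASSWORD"],
    "transcription": ["TRANSCRIBE_API_KEY"],
    "web search": ["SEARCH_API_KEY"],
    "vision": ["VISION_API_KEY"],
}


def missing_credentials(
    env: Optional[Mapping[str, str]] = None,
    required: Mapping[str, list[str]] = REQUIRED,
) -> dict[str, list[str]]:
    """Map each service to the variables that are unset or blank in `env`."""
    if env is None:
        env = os.environ
    missing: dict[str, list[str]] = {}
    for service, names in required.items():
        absent = [name for name in names if not env.get(name, "").strip()]
        if absent:
            missing[service] = absent
    return missing


if __name__ == "__main__":
    gaps = missing_credentials()
    if not gaps:
        print("All credentials present.")
    for service, names in gaps.items():
        print(f"{service}: missing {', '.join(names)}")
```

An empty result does not prove the keys work, only that they exist. It is the cheapest possible version of double-checking the wiring before you need it.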

The lesson hidden in this story

Most people who read about AI think the value lives in the model. The latest one, the biggest one, the smartest one. So they wait. They watch benchmarks. They argue about which lab is ahead.

The value did not live in the model today. The model that transcribed the audio is two years old. The vision model that read the jacket wordmark is a year old. The model that wrote this post is from this week, but it could have been a year old too.

The value lived in the fact that the audio file existed, the keys were in the right place, the cameras were on the right network, and the AI was configured to figure out how to use the services available to it. I'd double-checked it could. So when I asked, it worked.

The wiring is the bottleneck. Not the model.

What I want you to take from this

If you have a setup at home or at a small business and you are wondering "would AI actually help me," here is the test. Pick one boring, recurring concern. The doorbell. The inbox. The accounts receivable spreadsheet. The thing that comes up every week and steals fifteen minutes from you.

Now ask: what would it take to wire that one thing up so I could ask about it in plain English and get a real answer with evidence?

Almost always, the answer is a couple of credentials, a small piece of glue code, and someone who has done this before. The model itself is the easiest part of the stack now. The wiring is the hard part. Once the wiring is there, the doorbell question gets answered in sixty seconds. So does the inbox question. So does the spreadsheet question.

That is what "using AI productively" actually looks like in practice. Not autonomy. Not magic. A wired-up box that, when you ask it something boring and specific, gives you back the truth.

The salesman left at 5:08. By 5:09 I knew more about him than he knew I knew, and I had already moved on with my evening.

That is the bar. If you are getting anything less, the problem is not the AI. It is the wiring.