The Vector Database I Almost Built

Every AI lab is selling you a vector memory layer. Most of them solve a problem you don't have, and introduce one you didn't want.

A few months ago I sat down to add a vector database to our memory system. I had the reasoning ready. Embeddings are cheap now. Semantic search is better than grep. Every serious AI stack has a vector layer. Pinecone, Chroma, Weaviate, Qdrant, take your pick. The pattern is everywhere.

I almost did it.

Then I thought about it for ten more minutes and didn't.

Why I stopped

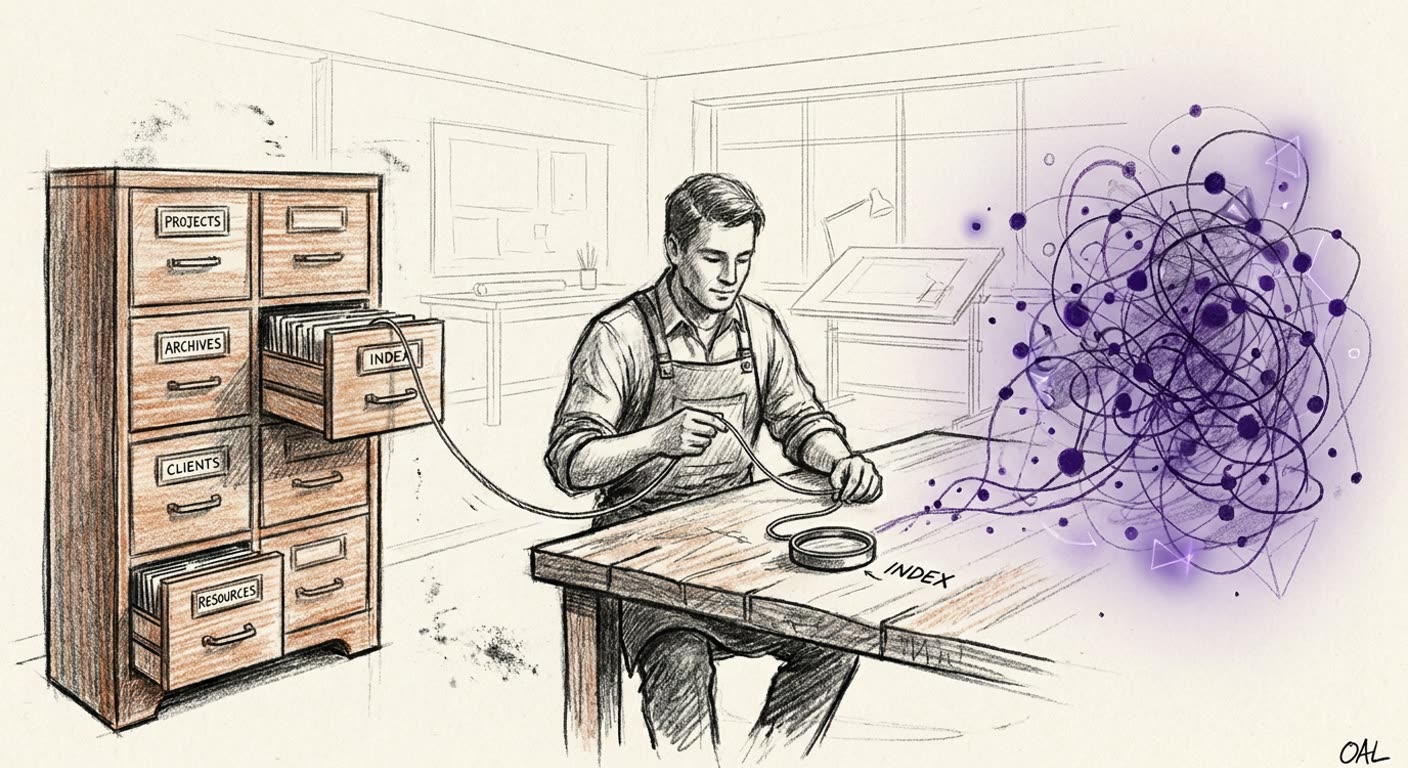

Our memory is a tree of flat files. Markdown notes, JSON logs, plain text transcripts, organized by topic in a directory called MEMORY. Every agent on every host can grep across it in milliseconds. Every tool we've ever built reads from it and writes to it in the same way. Git tracks every change. Backups are just rsync. If something breaks, I can open a file and read it.

The thing that nags me about a vector database is that it would become a second system of record. Two stores. Two things that have to stay in sync. The flat files would still be the truth, but the vector index would be the thing the agents actually query first. And the moment the index falls out of sync, you get an agent confidently citing notes that don't exist anymore, or missing notes that do.

I've been burned by that pattern before. Twice. Both times the symptom was the same. An agent quoted something with conviction, and the quote had been real two weeks earlier, before the source moved or got rewritten. The index didn't know.

What I'd do instead

The right way to add semantic search isn't to replace the file system. It's to layer on top of it.

A SQLite database with full-text search built in (FTS5). Maybe a small vector extension such as sqlite-vss if I really want embeddings. Generated as a derived view from the flat files. Rebuilt automatically when files change. Always rebuildable from scratch. If the index ever lies to me, I blow it away and regenerate it. The truth stays where it always was. In the files.
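Here is a minimal sketch of what that derived index could look like. I haven't built it, so treat it as an illustration under assumptions: Python's bundled sqlite3 module has FTS5 compiled in (common, not guaranteed), the memory tree holds only .md, .json and .txt files worth indexing, and the names rebuild_index, search and the notes table are made up for this example.

```python
import sqlite3
from pathlib import Path

# Assumption: these are the only file types worth indexing.
TEXT_SUFFIXES = {".md", ".json", ".txt"}


def rebuild_index(memory_root: Path, db_path: Path) -> int:
    """Throw the old index away and regenerate it from the files.

    Returns the number of files indexed.
    """
    db_path.unlink(missing_ok=True)  # the index is disposable by design
    conn = sqlite3.connect(db_path)
    try:
        # path is stored but not tokenized; body is what gets searched.
        conn.execute("CREATE VIRTUAL TABLE notes USING fts5(path UNINDEXED, body)")
        rows = [
            (
                str(p.relative_to(memory_root)),
                p.read_text(encoding="utf-8", errors="replace"),
            )
            for p in sorted(memory_root.rglob("*"))
            if p.is_file() and p.suffix in TEXT_SUFFIXES
        ]
        conn.executemany("INSERT INTO notes (path, body) VALUES (?, ?)", rows)
        conn.commit()
        return len(rows)
    finally:
        conn.close()


def search(db_path: Path, query: str, limit: int = 5) -> list[str]:
    """Return paths of the best-matching files, best match first."""
    conn = sqlite3.connect(db_path)
    try:
        cur = conn.execute(
            "SELECT path FROM notes WHERE notes MATCH ? ORDER BY rank LIMIT ?",
            (query, limit),
        )
        return [row[0] for row in cur]
    finally:
        conn.close()
```

The search returns paths, not content. An agent still opens the file to read it, so the index can point at the wrong place but can never be the thing that gets quoted.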

That's the architecture I'd actually trust. Not because it's clever. Because it has one source of truth and one accelerator, instead of two stores that argue.
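Knowing when the accelerator has drifted from the truth needs some kind of check. One way to sketch it, assuming you save a content hash per file at index time and compare it against the files later. The function names snapshot and drift are hypothetical, and hashing every file is the simplest option, not the fastest.

```python
import hashlib
from pathlib import Path


def snapshot(memory_root: Path) -> dict[str, str]:
    """Map each file's relative path to a SHA-256 of its contents."""
    return {
        str(p.relative_to(memory_root)): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(memory_root.rglob("*"))
        if p.is_file()
    }


def drift(indexed: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare the snapshot taken at index time with the files as they are now."""
    shared = set(indexed) & set(current)
    return {
        # Indexed, but the file is gone: the "citing notes that don't exist" case.
        "missing": sorted(set(indexed) - set(current)),
        # File exists, but the index never saw it.
        "unindexed": sorted(set(current) - set(indexed)),
        # Same path, different contents since indexing.
        "changed": sorted(path for path in shared if indexed[path] != current[path]),
    }
```

If any of the three lists is non-empty, the index is stale and gets regenerated. Nothing is repaired in place, because the files are the only thing worth trusting.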

When I'll actually build it

When grep starts hurting.

Right now there are about two hundred active files in our memory tree. Grep is instant. Agents find what they need by name or by topic. The system works.

At two thousand files it'll be slower. At ten thousand it'll likely be a real bottleneck. That's where the index earns its keep. Until then, building it would be solving a problem I don't have, with a pattern that introduces the failure modes I most want to avoid.
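"Starts hurting" is easier to act on with a number attached. A rough sketch of how I might measure it, assuming a plain substring scan in Python is a fair stand-in for grep (it is slower, so it errs on the pessimistic side). The function name timed_scan is made up for this example, and what counts as too slow is a judgment call.

```python
import time
from pathlib import Path


def timed_scan(memory_root: Path, needle: str) -> tuple[int, int, float]:
    """Scan every file for needle; return (files scanned, files matching, seconds)."""
    start = time.perf_counter()
    scanned = hits = 0
    for path in memory_root.rglob("*"):
        if not path.is_file():
            continue
        scanned += 1
        # errors="replace" keeps the scan going if a file is not valid UTF-8.
        if needle in path.read_text(encoding="utf-8", errors="replace"):
            hits += 1
    return scanned, hits, time.perf_counter() - start
```

Run it against the MEMORY directory every so often and write the result down. When the elapsed time stops feeling instant for the agents that depend on it, that's the signal to build the index, not before.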

There's a thing engineers do where we build infrastructure we don't yet need because the pattern is everywhere and it feels professional. That's also how you end up with three caches, two queues, a service mesh, and a bug nobody can find.

The wider point

Every AI lab right now is selling you the same thing. A vectorized memory layer. A graph of your data. A retrieval system that "remembers everything." Most of them are good ideas that don't apply to your situation, and that bring a sync problem you didn't have.

If your data fits in grep, you don't need a vector database yet. You need to do the work of writing things down clearly, naming them well, and putting them where you can find them. That part doesn't get any easier when you add embeddings on top.

I'll add the index when grep stops being enough. I won't add it because Andreessen Horowitz said I should.

References:

- SQLite FTS5 full text search
- sqlite-vss vector extension
- Simon Willison on local vector search
- Why I don't use embeddings for everything (Hamel Husain)